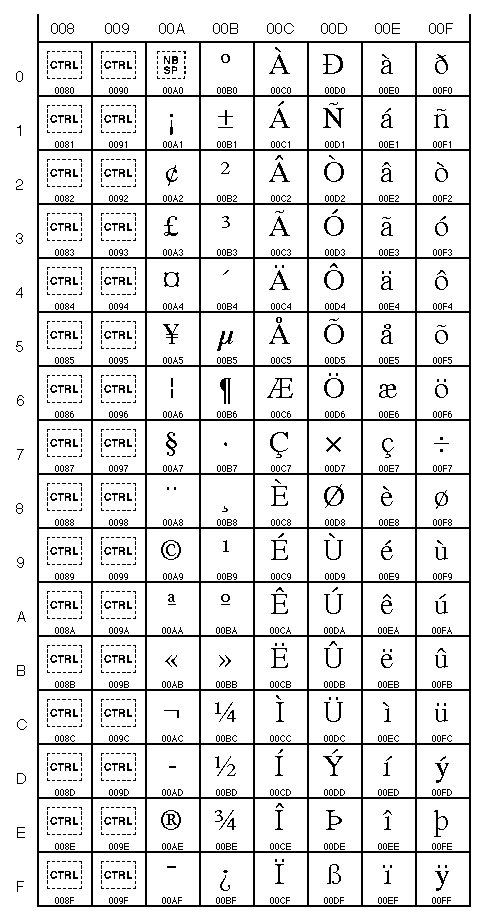

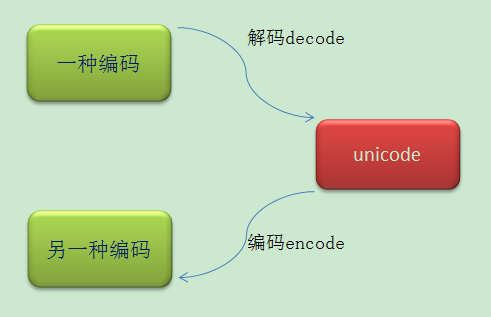

Here the text file contains Sharada script as the source and Devanagari script as the target. The file is opened as UTF-8, with undecodable bytes ignored. Only the comments from the loading and splitting code are shown:

    with open(fname, "r", encoding="utf-8", errors="ignore") as textFile:
        # the source and the target sentence is demarcated with tab,
        # iterate over each line and split the sentences to get
        # the individual source and target sentence pairs
        # collect the source sentences and target sentences into
        # return the list of source and target sentences

    # calculate the training and validation size
    # split the inputs into train, val, and test
    (trainSource, trainTarget) = (source, target)

The standardization step normalizes the text and removes punctuation with a series of regex replacements:

    text = tf_text.normalize_utf8(text, "NFKD")
    text = tf.strings.regex_replace(text, "", "")
    text = tf.strings.regex_replace(text, '□', '')
    text = tf.strings.regex_replace(text, '□', '')
    text = tf.strings.regex_replace(text, '', '')
    # text = tf.strings.unicode_decode(text, input_encoding='UTF-8')
    text = tf.strings.join(["", text, ""], separator=" ")
    text = tf.strings.regex_replace(text, '॥', '')
    #text = tf.strings.unicode_decode(text, input_encoding='UTF-8')

The data is then loaded and split, and the source and target text processing layers are created and adapted:

    (source, target) = load_data(fname=DATA_FNAME)
    print(" splitting the dataset into train, val, and test.")
    (train, val, test) = splitting_dataset(source=source, target=target)
    # create source text processing layer and adapt on the training
    print(" adapting the source text processor on the source dataset.")
    standardize=tf_lower_and_split_punct1, max_tokens=SOURCE_VOCAB_SIZE, split="whitespace"
    # create target text processing layer and adapt on the training
    print(" adapting the target text processor on the target dataset.")
    standardize=tf_lower_and_split_punct2, max_tokens=TARGET_VOCAB_SIZE, split="whitespace"

When this code is used for machine translation with a transformer model, it gives the error:

    UnicodeDecodeError: 'utf-8' codec can't decode bytes in position 15-16: unexpected end of data

When we run the function tf_split_punct1 separately to remove punctuation from English text, it returns the same English text without punctuation, but when we do the same to remove punctuation from Devanagari or Sharada text it gives this: tf.

For background, the Python 2 language reference states that Python uses the 7-bit ASCII character set for program text, and that since version 2.3 an encoding declaration can be used to indicate that string literals and comments use an encoding different from ASCII. The run-time character set depends on the I/O devices connected to the program but is generally a superset of ASCII. Python 3 reads source files as UTF-8 by default.

The utf-8-sig codec implements a variant of the UTF-8 codec. On encoding, a UTF-8 encoded BOM is prepended to the UTF-8 encoded bytes; for the stateful encoder this is done only once (on the first write to the byte stream). On decoding, an optional UTF-8 encoded BOM at the start of the data is skipped.
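The punctuation-removal step described above can be cross-checked outside TensorFlow. The following is only a minimal sketch in plain Python, not the code from the post: the helper name `strip_punctuation` and the sample strings are hypothetical, and it assumes that "punctuation" means any character whose Unicode general category starts with `P`. That definition covers the Devanagari danda (।) and double danda (॥), and it should cover the Sharada danda marks as well, provided the Unicode database shipped with the Python build includes that block.

```python
import unicodedata


def strip_punctuation(text: str) -> str:
    """Drop characters whose Unicode general category is punctuation (P*).

    Hypothetical helper for illustration; it works on code points, so
    multi-byte characters are never cut in half.
    """
    kept = [ch for ch in text if not unicodedata.category(ch).startswith("P")]
    # Collapse any runs of whitespace left behind by removed marks.
    return " ".join("".join(kept).split())


# Sample inputs (assumed for illustration, not taken from the dataset).
sample_english = "Hello, world!"
sample_devanagari = "नमस्ते। दुनिया॥"

english = strip_punctuation(sample_english)
devanagari = strip_punctuation(sample_devanagari)

print(english)
print(devanagari)
```

Because the filter works on whole characters rather than on bytes, the vowel signs and virama (categories `Mn` and `Mc`) stay attached to their consonants. Comparing this output with the TensorFlow function's output on the same sentence is one way to see which replacement step damages the text.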
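The `UnicodeDecodeError` quoted above reports "unexpected end of data", which is the message Python gives when a byte string stops partway through a multi-byte UTF-8 character. The post does not show where the bytes get cut, so the sketch below only illustrates the mechanism under that assumption: the helper `try_decode` and the chosen code points are hypothetical. Devanagari characters take three bytes in UTF-8 and Sharada characters take four, so any byte-level slicing or filtering can split them, whereas ASCII English text is one byte per character and is unaffected.

```python
def try_decode(data: bytes) -> str:
    """Decode UTF-8 bytes, returning the decoder's reason on failure."""
    try:
        return data.decode("utf-8")
    except UnicodeDecodeError as exc:
        return f"error: {exc.reason}"


devanagari_char = "\u0915"      # क, a code point in the Devanagari block
sharada_char = "\U00011191"     # a code point in the Sharada block (U+11180 to U+111DF)

devanagari_bytes = devanagari_char.encode("utf-8")
sharada_bytes = sharada_char.encode("utf-8")
print(len(devanagari_bytes), len(sharada_bytes))

# Dropping the final byte leaves an incomplete sequence at the end of the data.
truncated_result = try_decode(devanagari_bytes[:-1])
print(truncated_result)

# Decoding the complete byte string round-trips without error.
complete_result = try_decode(devanagari_bytes)
print(complete_result == devanagari_char)
```

If this is what happens in the pipeline, decoding only complete strings (for example, the full tensor value rather than a byte slice of it) would avoid the error; `errors="ignore"` hides it but silently drops the damaged character.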
